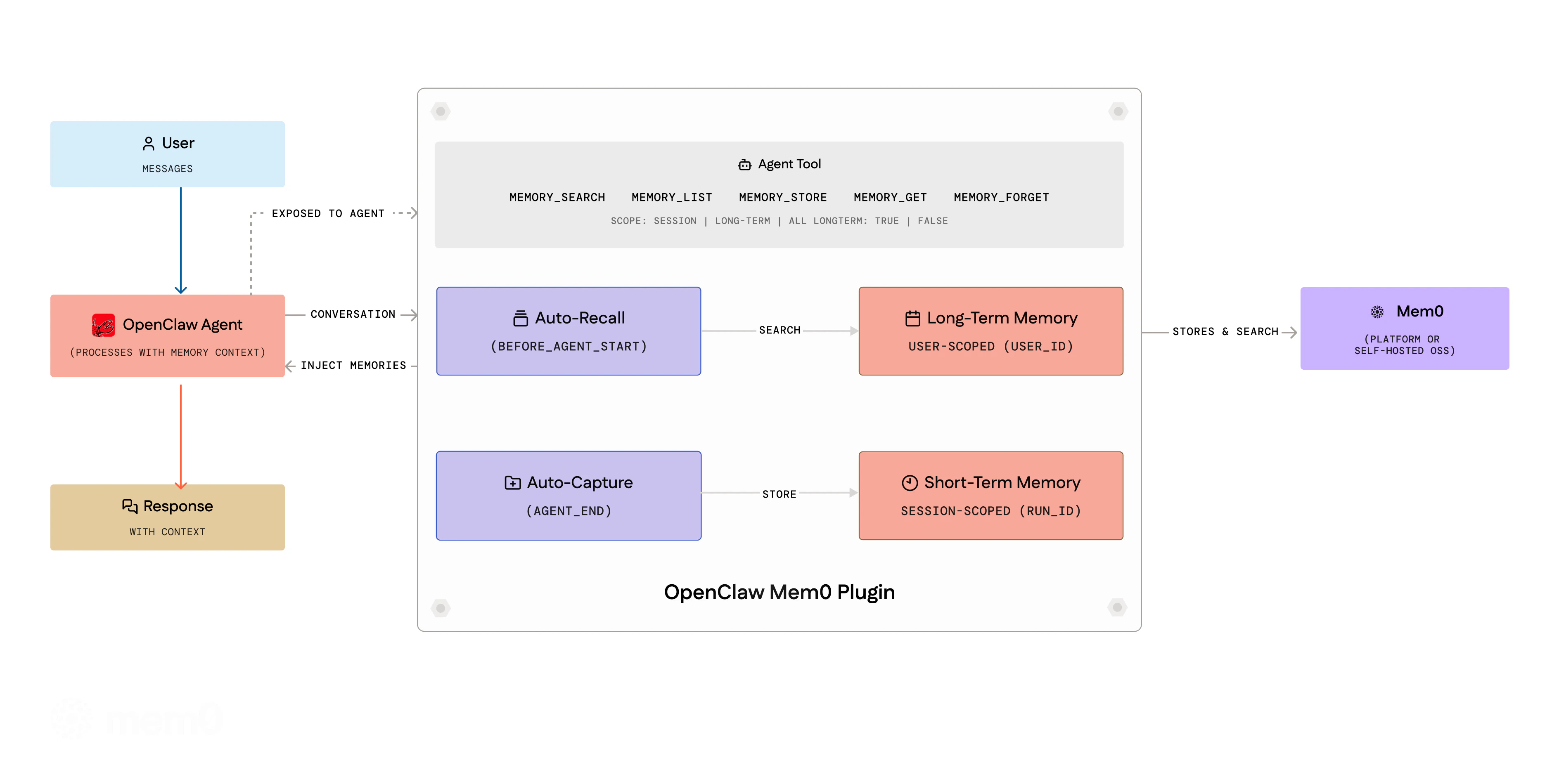

The @mem0/openclaw-mem0 plugin adds long-term memory to OpenClaw agents. Your agent forgets everything between sessions; this plugin fixes that by automatically watching conversations, extracting what matters, and bringing it back when relevant.

Overview

- Triage — The agent extracts durable facts from conversations using a structured protocol with importance gates and domain overlays

- Recall — Before each turn, relevant memories are retrieved with reranking and injected into context

- Dream — Periodic memory consolidation: merges duplicates, resolves conflicts, prunes stale entries

- Agent Tools — Eight tools for explicit memory operations during conversations

Skills mode, `autoRecall`, and `autoCapture` are all enabled by default during `openclaw mem0 init`.

Requirements

Check your OpenClaw version:

| OpenClaw Version | Plugin Support |
|---|---|
| >= 2026.4.25 | Fully supported |

Installation

The fastest way is to install directly from your OpenClaw chat, with no CLI or config editing needed. Copy and paste the setup command into your OpenClaw chat: Telegram, WhatsApp, the default chat, or any channel where your agent lives.

Setup and Configuration

Understanding userId

The userId field is a string you choose to uniquely identify the user whose memories are being stored. It is not something you look up in the Mem0 dashboard — you define it yourself.

Pick any stable, unique identifier for the user. Common choices:

- Your application's internal user ID (e.g. `"user_123"` or an email address)
- A UUID (e.g. `"550e8400-e29b-41d4-a716-446655440000"`)
- A simple username (e.g. `"alice"`)

Memories are scoped by `userId`: different values create separate memory namespaces. If you don't set it, it defaults to your OS username.
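The fallback rule above can be pictured with a small sketch. This is an illustration only, not the plugin's code: `resolve_user_id` and the `store` dictionary are hypothetical names, and treating a blank string as "not set" is an assumption.

```python
import getpass


def resolve_user_id(configured=None):
    """Return the configured userId, or fall back to the OS username."""
    # Assumption: a blank or missing value counts as "not set".
    if configured and configured.strip():
        return configured.strip()
    return getpass.getuser()


# Toy store: each distinct userId gets its own list of memories,
# which is what "separate memory namespaces" means in practice.
store = {}
store.setdefault(resolve_user_id("alice"), []).append("prefers dark mode")
store.setdefault(resolve_user_id("user_123"), []).append("works in UTC+2")
```

Because the identifier is the namespace key, changing it later (for example from a username to a UUID) would start a fresh, empty namespace in this model, which is why a stable value matters.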

Platform Mode (Mem0 Cloud)

There are two ways to set up @mem0/openclaw-mem0 on the Mem0 platform:

- Chat setup (recommended): run the setup inside any OpenClaw chat. No config editing, no API key handling.
- Manual config: edit `openclaw.json` directly.

Option 1: Chat Setup (Recommended)

You no longer need manual config editing to get started. Everything happens inside the OpenClaw chat itself.

Send the setup command to your OpenClaw agent

Open any OpenClaw channel (Telegram, WhatsApp, your default chat, wherever your agent lives), then paste and send the setup command.

OpenClaw responds with a Mem0 setup card and immediately asks:

“What’s your email address? I’ll send you a verification code to connect your Mem0 account.”

Enter your email

Type your email address and send it. Mem0 sends back:

“Check your email for a 6-digit code and paste it here.”

The chat flow uses the same underlying config as manual setup: it writes `apiKey`, `userId`, and skills config into `openclaw.json` for you. You can still open the file to inspect or override values afterward.

Option 2: Manual Config

Get your API key

Get your API key from app.mem0.ai.

Open-Source Mode (Self-hosted)

No Mem0 key needed. Defaults use OpenAI (gpt-5-mini for LLM, text-embedding-3-small for embeddings) — requires OPENAI_API_KEY. For a fully local setup, use Ollama for both.

Option 1: Interactive Wizard (Recommended)

Run the guided 4-step wizard:

LLM provider

Choose OpenAI (`gpt-5-mini`), Ollama (`llama3.1:8b`, fully local), or Anthropic (`claude-sonnet-4-5-20250514`). Provide an API key or base URL as needed.

Embedding provider

Choose OpenAI (`text-embedding-3-small`) or Ollama (`nomic-embed-text`, local). If the same provider was chosen for LLM, the API key and URL are reused automatically.

Vector store

Choose Qdrant (`http://localhost:6333`) or PGVector (PostgreSQL). Connectivity is verified before proceeding.

Option 2: Non-Interactive Setup

For CI/CD, scripts, or agent-driven setup, pass all options as flags. Add `--json` for machine-readable output (useful when an LLM agent is driving the setup).

All --oss-* flags


| Flag | Description |
|---|---|
| `--oss-llm <provider>` | `openai`, `ollama`, or `anthropic` |
| `--oss-llm-key <key>` | API key for LLM provider |
| `--oss-llm-model <model>` | Override default LLM model |
| `--oss-llm-url <url>` | Base URL (Ollama only) |
| `--oss-embedder <provider>` | `openai` or `ollama` |
| `--oss-embedder-key <key>` | API key for embedder |
| `--oss-embedder-model <model>` | Override default embedder model |
| `--oss-embedder-url <url>` | Base URL (Ollama only) |
| `--oss-vector <provider>` | `qdrant` or `pgvector` |
| `--oss-vector-url <url>` | Qdrant server URL (default: `http://localhost:6333`) |
| `--oss-vector-host <host>` | PGVector host |
| `--oss-vector-port <port>` | PGVector port |
| `--oss-vector-user <user>` | PGVector user |
| `--oss-vector-password <pw>` | PGVector password |
| `--oss-vector-dbname <db>` | PGVector database name |
| `--oss-vector-dims <n>` | Override embedding dimensions |
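When a script drives the setup, it can help to assemble and validate the flags before invoking the CLI. The sketch below only builds the argument list from the flag names in the table; `build_oss_flags` is a hypothetical helper, the command these arguments are appended to is not shown here, and the Ollama URL is Ollama's usual local default rather than something this page specifies.

```python
# Allowed values for the flags whose choices are listed in the table.
CHOICES = {
    "oss-llm": {"openai", "ollama", "anthropic"},
    "oss-embedder": {"openai", "ollama"},
    "oss-vector": {"qdrant", "pgvector"},
}
KNOWN_FLAGS = {
    "oss-llm", "oss-llm-key", "oss-llm-model", "oss-llm-url",
    "oss-embedder", "oss-embedder-key", "oss-embedder-model", "oss-embedder-url",
    "oss-vector", "oss-vector-url", "oss-vector-host", "oss-vector-port",
    "oss-vector-user", "oss-vector-password", "oss-vector-dbname", "oss-vector-dims",
}


def build_oss_flags(options):
    """Turn {"oss-llm": "ollama", ...} into ["--oss-llm", "ollama", ...]."""
    args = []
    for name, value in options.items():
        if name not in KNOWN_FLAGS:
            raise ValueError(f"unknown flag: --{name}")
        if name in CHOICES and value not in CHOICES[name]:
            raise ValueError(f"--{name} must be one of {sorted(CHOICES[name])}")
        args += [f"--{name}", str(value)]
    return args


# Example: a fully local setup (Ollama for LLM and embeddings, Qdrant for vectors).
flags = build_oss_flags({
    "oss-llm": "ollama",
    "oss-llm-url": "http://localhost:11434",  # assumed local Ollama endpoint
    "oss-embedder": "ollama",
    "oss-vector": "qdrant",
    "oss-vector-url": "http://localhost:6333",
})
print(" ".join(flags))
```

Validating up front means a typo such as `--oss-vector quadrant` fails in the script rather than halfway through a CI job.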

Option 3: Manual Config

Minimal config, using OpenAI defaults. All `oss` fields are optional. See the Mem0 OSS docs for available providers.

Short-term vs Long-term Memory

Memories are organized into two scopes:

- Session (short-term): Auto-capture stores memories scoped to the current session via Mem0's `run_id`/`runId` parameter. These are contextual to the ongoing conversation.
- User (long-term): The agent can explicitly store long-term memories using the `memory_add` tool (with `longTerm: true`, the default). These persist across all sessions for the user.

Agent Tools

The agent gets eight tools it can call during conversations:

| Tool | Description |
|---|---|
| `memory_search` | Search memories by natural language query. Supports scope, categories, filters. |
| `memory_add` | Store facts. Accepts text or facts array, category, importance, metadata. |
| `memory_get` | Retrieve a single memory by ID |
| `memory_list` | List all memories. Filter by userId, agentId, scope. |
| `memory_update` | Update a memory's text in place. Preserves history. |
| `memory_delete` | Delete by memoryId, query (search-and-delete), or `all: true`. |
| `memory_event_list` | List recent background processing events (platform mode only). |
| `memory_event_status` | Get status of a specific event by ID (platform mode only). |

The `memory_search` and `memory_list` tools accept a `scope` parameter (`"session"`, `"long-term"`, or `"all"`) to control which memories are queried.
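One way to picture the `scope` parameter is as a filter over memories that either carry a session `run_id` or do not. The model below is a toy, not the plugin's implementation: the `Memory` class and `filter_by_scope` are hypothetical, and reading `"all"` as "long-term plus the current session" is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Memory:
    text: str
    run_id: Optional[str] = None  # set for session-scoped memories, None for long-term


def filter_by_scope(memories, scope, current_run_id=None):
    """Select memories for scope "session", "long-term", or "all"."""
    if scope == "session":
        return [m for m in memories if m.run_id is not None and m.run_id == current_run_id]
    if scope == "long-term":
        return [m for m in memories if m.run_id is None]
    if scope == "all":
        # Assumption: "all" means long-term memories plus the current session's.
        return [m for m in memories if m.run_id is None or m.run_id == current_run_id]
    raise ValueError(f"unknown scope: {scope!r}")


memories = [
    Memory("prefers concise answers"),                 # long-term
    Memory("debugging the login page", run_id="s1"),   # current session
    Memory("was planning a trip", run_id="s0"),        # an earlier session
]
session_only = filter_by_scope(memories, "session", current_run_id="s1")
```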

CLI Commands

All commands support `--json` for machine-readable output, which is useful when an LLM agent drives the CLI programmatically. Run `openclaw mem0 help --json` to discover every command and flag.

Configuration Options

General Options

| Key | Type | Default | Description |
|---|---|---|---|
| `mode` | `"platform"` or `"open-source"` | `"platform"` | Which backend to use |
| `userId` | string | OS username | Scope memories per user |
| `autoRecall` | boolean | `true` | Inject memories before each turn. Ignored when skills is configured. |
| `autoCapture` | boolean | `true` | Store facts after each turn. Ignored when skills is configured. |
| `topK` | number | `5` | Max memories per recall |
| `searchThreshold` | number | `0.3` | Min similarity (0–1) |
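The interaction between `topK` and `searchThreshold` can be sketched as "drop weak matches, then keep the best few". The function below is a hypothetical illustration using the default values from the table; whether the real threshold comparison is inclusive is an assumption.

```python
def select_memories(scored, top_k=5, search_threshold=0.3):
    """Pick memories to inject from (text, similarity) pairs, similarity in [0, 1]."""
    # Assumption: a score exactly equal to the threshold is kept.
    kept = [(text, score) for text, score in scored if score >= search_threshold]
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return kept[:top_k]


candidates = [
    ("likes TypeScript", 0.82),
    ("lives in Lisbon", 0.12),
    ("uses Qdrant locally", 0.55),
    ("has a dog", 0.29),
]
recalled = select_memories(candidates, top_k=2)
```

Raising `searchThreshold` trades recall for precision, while `topK` caps how much context a single turn can consume.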

Platform Mode Options

| Key | Type | Default | Description |
|---|---|---|---|
| `apiKey` | string | (none) | Required. Mem0 API key (supports `${MEM0_API_KEY}`) |
| `customInstructions` | string | (built-in) | Extraction rules: what to store, how to format |
| `customCategories` | object | (12 defaults) | Category name → description map for tagging |

Open-Source Mode Options

| Key | Type | Default | Description |
|---|---|---|---|
| `customInstructions` | string | (built-in) | Extraction prompt for memory processing |
| `oss.embedder.provider` | string | `"openai"` | Embedding provider (`"openai"`, `"ollama"`, etc.) |
| `oss.embedder.config` | object | (none) | Provider config: `apiKey`, `model`, `baseURL` |
| `oss.vectorStore.provider` | string | `"memory"` | Vector store (`"memory"`, `"qdrant"`, `"chroma"`, etc.) |
| `oss.vectorStore.config` | object | (none) | Provider config: `host`, `port`, `collectionName`, `dimension` |
| `oss.llm.provider` | string | `"openai"` | LLM provider (`"openai"`, `"anthropic"`, `"ollama"`, etc.) |
| `oss.llm.config` | object | (none) | Provider config: `apiKey`, `model`, `baseURL`, `temperature` |
| `oss.historyDbPath` | string | (none) | SQLite path for memory edit history |
| `oss.disableHistory` | boolean | `false` | Disable memory edit history tracking |

oss is optional — defaults use OpenAI embeddings (text-embedding-3-small), in-memory vector store, and OpenAI LLM (gpt-5-mini).
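To make "optional with defaults" concrete, here is a rough sketch of how a partial `oss` block could be filled in from the defaults listed above. It is not the plugin's actual merge logic: `resolve_oss` is a hypothetical helper, and dropping the default model when the provider changes is an assumption made so that an OpenAI model name is never paired with another provider.

```python
# Defaults as described in this section.
OSS_DEFAULTS = {
    "embedder": {"provider": "openai", "config": {"model": "text-embedding-3-small"}},
    "vectorStore": {"provider": "memory", "config": {}},
    "llm": {"provider": "openai", "config": {"model": "gpt-5-mini"}},
}


def resolve_oss(user_oss=None):
    """Fill in missing sections and fields of a partial oss config."""
    user_oss = user_oss or {}
    resolved = {}
    for section, default in OSS_DEFAULTS.items():
        chosen = user_oss.get(section, {})
        provider = chosen.get("provider", default["provider"])
        # Default model names only make sense for the default provider.
        base_config = default["config"] if provider == default["provider"] else {}
        resolved[section] = {
            "provider": provider,
            "config": {**base_config, **chosen.get("config", {})},
        }
    return resolved


# Only the LLM is overridden; embedder and vector store keep their defaults.
local_llm = resolve_oss({"llm": {"provider": "ollama", "config": {"model": "llama3.1:8b"}}})
```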

Plugin Management

Updating the Plugin

Checking Plugin Status

Troubleshooting

"plugins.allow excludes mem0" Error

If you see this error, add `mem0` to the `plugins.allow` list in `openclaw.json`.

Plugin Not Activating

If the plugin installs but doesn't work:

- Verify `plugins.slots.memory` is set to `"openclaw-mem0"` (not the npm package name)
- Check `openclaw plugins list --enabled` to confirm the plugin is loaded
- Run `openclaw mem0 status` to verify configuration

Plugin Update Not Working

If `openclaw plugins update` fails:

- Use the plugin ID: `openclaw plugins update openclaw-mem0`
- Update all plugins at once: `openclaw plugins update --all`
- If that fails, uninstall and reinstall the plugin.

Privacy & Security

Data Flow

| Mode | Where data goes | Storage |
|---|---|---|
| Platform | Conversations sent to api.mem0.ai for extraction and storage | Mem0 cloud |
| Open-source | Embeddings generated via configured provider (default: OpenAI API). Vectors stored locally. | `~/.mem0/vector_store.db` (SQLite) |

Auto-Capture and Auto-Recall

Auto-capture and auto-recall are enabled by default. When skills mode is configured (the default after `openclaw mem0 init`), these are ignored in favor of the skills-based triage/recall/dream protocol.

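To disable either, set `autoRecall` or `autoCapture` to `false` in the plugin config. The agent can still call the memory tools (`memory_add`, `memory_search`, etc.) explicitly regardless of these settings.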

Credential Protection

The plugin never stores API keys, tokens, or secrets as memories. Five independent layers enforce this:

- Triage gate: The extraction prompt rejects values matching known credential patterns (`sk-`, `m0-`, `ghp_`, `AKIA`, `Bearer`, `password=`, `token=`, `secret=`)
- Dream cleanup: Periodic memory consolidation deletes any memories that slipped through containing credential patterns
- Extraction instructions: Default extraction rules explicitly instruct the model to store only that a credential was configured, never the value
- Configurable patterns: Add custom credential patterns via `skills.triage.credentialPatterns`
- CLI redaction: `openclaw mem0 config show` redacts sensitive fields (`apiKey`, `oss.*.config.apiKey`)
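A pattern-based gate like the ones listed above can be approximated with a small regular-expression check. This sketch is deliberately coarse and is not the plugin's implementation: `looks_like_credential` is a hypothetical helper, the word-boundary rule is an assumption added to avoid flagging words such as "risk-free", and `extra_patterns` only stands in for the idea of user-supplied patterns.

```python
import re

# Built-in markers, taken from the list above.
CREDENTIAL_MARKERS = ["sk-", "m0-", "ghp_", "AKIA", "Bearer", "password=", "token=", "secret="]

# Require that the marker is not glued to a preceding letter or digit.
_BUILTIN = re.compile(
    r"(?<![A-Za-z0-9])(?:" + "|".join(re.escape(m) for m in CREDENTIAL_MARKERS) + r")"
)


def looks_like_credential(text, extra_patterns=()):
    """Return True when text contains a built-in marker or any extra regex."""
    if _BUILTIN.search(text):
        return True
    return any(re.search(pattern, text) for pattern in extra_patterns)


candidate_facts = [
    "User prefers dark mode",
    "API key is sk-abc123",
    "Wants a risk-free rollout",
]
safe_facts = [fact for fact in candidate_facts if not looks_like_credential(fact)]
```

A filter like this is only one layer; the list above pairs it with prompt-level instructions and later cleanup precisely because simple patterns miss things.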

API Key Storage

Plugin config is stored in `~/.openclaw/openclaw.json` with file permissions `0o600` (readable and writable by the owner only). For production deployments, use environment variable references (`${MEM0_API_KEY}`) or SecretRef objects instead of plaintext keys.
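Both ideas in this paragraph, `${NAME}` references and owner-only file permissions, are easy to check from a script. The sketch below shows one possible way to expand such references and to verify a file's mode; `expand_env_refs` and `is_owner_only` are hypothetical helpers, not functions provided by the plugin, and the permission check assumes a POSIX system.

```python
import os
import re
import stat
import tempfile

_ENV_REF = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def expand_env_refs(value, env=None):
    """Replace ${NAME} with env[NAME]; raise if a referenced variable is unset."""
    env = os.environ if env is None else env

    def _substitute(match):
        name = match.group(1)
        if name not in env:
            raise KeyError(f"environment variable {name} is not set")
        return env[name]

    return _ENV_REF.sub(_substitute, value)


def is_owner_only(path):
    """True when the file mode is exactly 0o600 (owner read/write, nobody else)."""
    return stat.S_IMODE(os.stat(path).st_mode) == 0o600


# Demonstration on a throwaway file rather than a real config.
fd, demo_path = tempfile.mkstemp(suffix=".json")
os.close(fd)
os.chmod(demo_path, 0o600)
owner_only = is_owner_only(demo_path)
os.remove(demo_path)
```

Keeping the key in the environment means the config file can be committed or shared without exposing the secret itself.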

Telemetry

Anonymous usage telemetry (PostHog) is enabled by default to help improve the plugin. No conversation content or memory values are included, only event counts (recall, capture, tool usage, CLI commands). To opt out, set the environment variable:

System Prompt Context

The plugin injects memory-related instructions into the agent's system context via OpenClaw's `prependSystemContext` mechanism. This includes the memory triage protocol and recalled memories. This is the standard OpenClaw plugin SDK pattern for memory backends; no user-facing prompts are modified.
